ELK Stack Practical Deployment Tutorial

Stitch-Zhang

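An idle programmer

A complete setup of the ELK Stack components, configured to analyze internal-network DNS query logs, Cisco logs, and more, with the data visualized in Kibana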

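ELK 8.0 Deployment Procedure#

Server List#

All servers meet the following conditions:

- A new user, elk, is added to run the software

- Software packages are placed in the /soft directory

- Data files are placed in the /data directory

- Logs are placed in the /logs directory

- The elk user has read and write permissions on the directories above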
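The prerequisites above can be sketched as a small shell function. This is a minimal sketch rather than the author's script: the function name prepare_dirs and its optional prefix argument are assumptions, added so the layout can be tried in a scratch directory first. Creating the user and changing ownership need root, so they appear only as comments.

```shell
# Create the three directories from the list above under an optional prefix.
#   prepare_dirs           -> /soft /data /logs        (needs root)
#   prepare_dirs /tmp/elk  -> /tmp/elk/soft and so on  (scratch run)
prepare_dirs() {
  prefix="${1:-}"
  for dir in soft data logs; do
    mkdir -p "${prefix}/${dir}" || return 1
  done
}

# On a real host, as root (user name taken from the list above):
#   useradd -m elk
#   prepare_dirs
#   chown -R elk /soft /data /logs
```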

| IP | Spec | Packages | Purpose |
|---|---|---|---|
| 10.26.211.110 | VM, 4C8G | rsyslog filebeat | Receive and process syslog logs |
| 10.26.211.172 | VM, 4C8G | rsyslog filebeat | Receive and process syslog logs |
| 10.26.211.166 | VM, 4C8G | logstash redis | Data buffering and processing |
| 10.26.211.167 | VM, 4C8G | logstash redis | Data buffering and processing |
| 10.26.211.168 | VM, 4C8G | elasticsearch | Storage and analysis node |
| 10.26.211.169 | VM, 4C8G | elasticsearch | Storage and analysis node |
| 10.26.211.170 | VM, 4C8G | elasticsearch | Storage and analysis node |
| 10.26.211.171 | VM, 4C8G | elasticsearch kibana nginx | Ingest node, presentation platform |
| 10.26.246.60 | Phys, 16C128G | filebeat logstash | Capture DNS logs with TCPDUMP |

Elasticsearch#

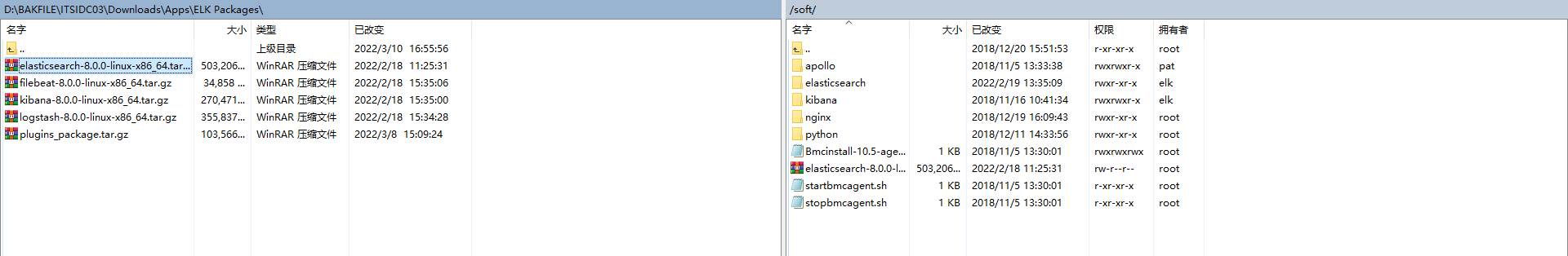

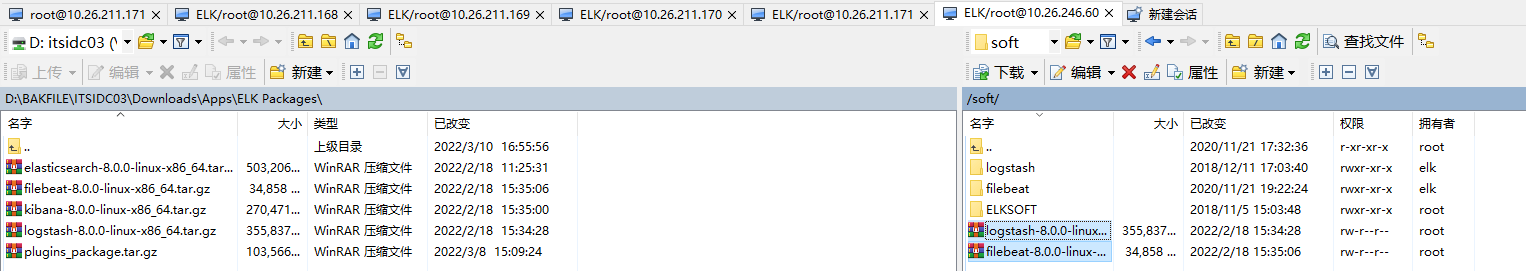

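Upload the Package#

Use WinSCP to upload the package to the /soft directory on the following hosts:

- 10.26.211.168

- 10.26.211.169

- 10.26.211.170

- 10.26.211.171

Extract the Package#

Log in to the server as the elk user

If you logged in as root, switch to the elk user with su - elk

Change to the /soft directory

Extract the package

Rename the folder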
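The extract and rename steps can be combined. The sketch below assumes GNU tar or bsdtar (for --strip-components); the helper name unpack_as, the archive file name, and the target folder name elasticsearch8 are illustrative assumptions, not values confirmed by the text.

```shell
# Extract a .tar.gz into a directory with a chosen name, dropping the
# versioned top-level folder that the archive contains.
unpack_as() {
  tarball="$1"
  target="$2"
  mkdir -p "$target" || return 1
  tar -xzf "$tarball" -C "$target" --strip-components=1
}

# Example on a real host, as the elk user (names are assumptions):
#   cd /soft
#   unpack_as elasticsearch-8.0.0-linux-x86_64.tar.gz /soft/elasticsearch8
```

Extracting straight into the final folder avoids a separate mv step and gives the same result when the archive has a single top-level directory.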

Configuration#

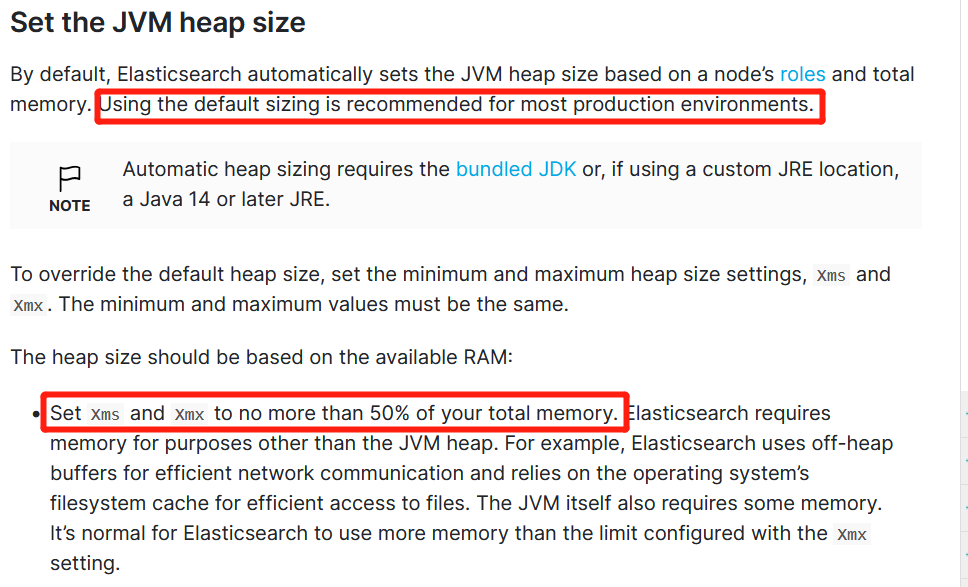

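Configure the JVM Heap Size#

Note#

The official documentation recommends:

- Use the default configuration in production

- Do not set the heap to more than 50% of the available memory

The servers here have 8 GB of memory, so the heap is capped at 4G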
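The 50% rule can be worked through as follows. This is a sketch under assumptions: the function name render_heap_options is hypothetical, and the file location in the closing comment is one common place for custom JVM options, not necessarily the one the author used.

```shell
# Print JVM heap options sized at half of the given RAM (whole GB).
# Xms and Xmx get the same value so the heap is not resized at runtime.
render_heap_options() {
  total_gb="$1"
  heap_gb=$((total_gb / 2))
  printf '%s\n' "-Xms${heap_gb}g" "-Xmx${heap_gb}g"
}

# 8 GB hosts from the server list give a 4 GB heap.
render_heap_options 8

# On a real host the two lines could be written to a file such as
#   ${ES_HOME}/config/jvm.options.d/heap.options
```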

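Configure Elasticsearch#

The configuration file is YAML. Each key-value pair needs a space after the colon:

key: value

Cluster name

Node name

elasticsearch1 - elasticsearch4

Data directory

Log directory

Bind IP

Port

*Configure roles (10.26.211.171)

By default a node is assigned the following roles:

- master

- data

- data_content

- data_hot

- data_warm

- data_cold

- data_frozen

- ingest

- ml

- remote_cluster_client

- transform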
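The settings listed above map onto elasticsearch.yml keys roughly as in the sketch below. The cluster name elk-cluster and the elasticsearch8 subdirectories are assumptions; port 9200 matches the URL used later in this tutorial. The roles argument is optional, and ingest for 10.26.211.171 is taken from the server table, so treat the exact role list as unconfirmed.

```shell
# Print the elasticsearch.yml settings listed above for one node.
# Arguments: node name, bind IP, optional role list (e.g. "ingest").
render_es_yml() {
  node_name="$1"
  bind_ip="$2"
  roles="${3:-}"
  cat <<EOF
cluster.name: elk-cluster
node.name: ${node_name}
path.data: /data/elasticsearch8
path.logs: /logs/elasticsearch8
network.host: ${bind_ip}
http.port: 9200
EOF
  # Without node.roles the node keeps all default roles.
  if [ -n "$roles" ]; then
    echo "node.roles: [ ${roles} ]"
  fi
}

render_es_yml elasticsearch1 10.26.211.168
render_es_yml elasticsearch4 10.26.211.171 ingest
```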

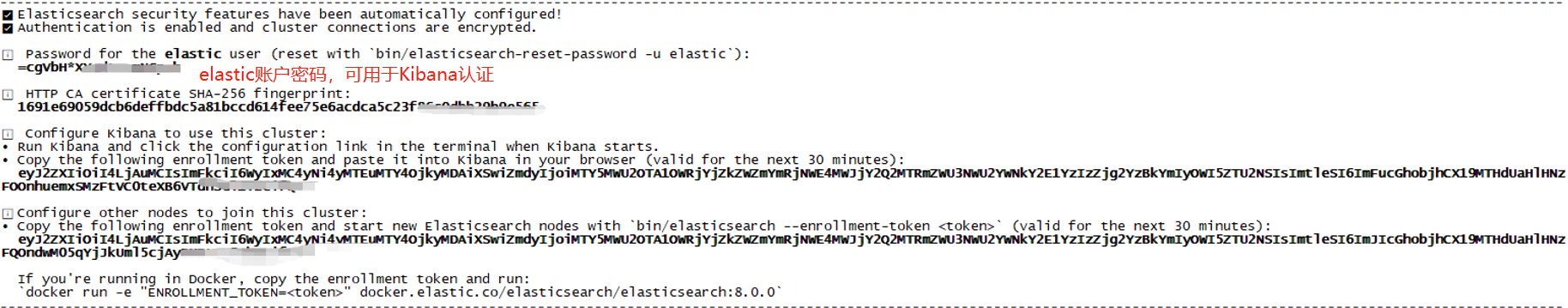

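Initialize the Cluster#

Run this on any one of the data node servers

10.26.211.168 is used below

Security features are configured automatically, including:

- Self-signed certificates issued into the ${elasticsearch_home}/config/certs directory

- xpack configuration enabled

- The elastic user initialized with a password

- A cluster token (enrollment token), valid for half an hour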

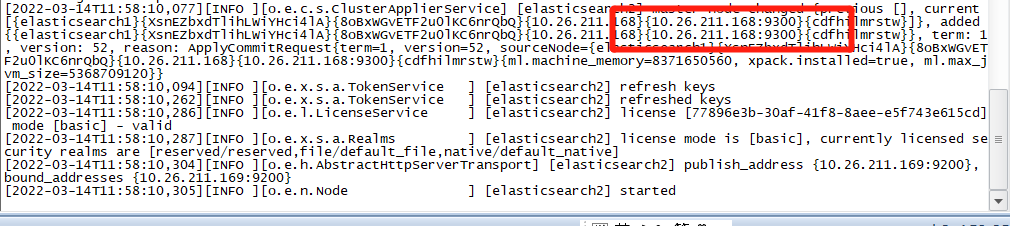

Output like the following indicates that cluster initialization is complete

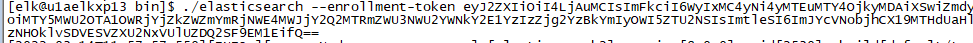

Join the Cluster#

Perform the join operation on the following servers:

- 10.26.211.169

- 10.26.211.170

- 10.26.211.171
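Joining can be sketched with the two tools that ship with Elasticsearch 8: elasticsearch-create-enrollment-token on the already running node, and the --enrollment-token option on the node that joins. The install path in ES_HOME and the function names are assumptions.

```shell
# ES_HOME is an assumed install location; adjust it to the real path.
ES_HOME="${ES_HOME:-/soft/elasticsearch8}"

# Run on the first node (10.26.211.168): print a fresh node enrollment token.
new_node_token() {
  "${ES_HOME}/bin/elasticsearch-create-enrollment-token" -s node
}

# Run on each joining node: start Elasticsearch with the token so that it
# enrolls into the existing cluster on its first start.
join_cluster() {
  token="$1"
  if [ -z "$token" ]; then
    echo "usage: join_cluster <enrollment-token>" >&2
    return 2
  fi
  "${ES_HOME}/bin/elasticsearch" --enrollment-token "$token"
}
```

Because the token expires after half an hour, generating a new one with new_node_token just before each join is the simplest way to avoid an expired token.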

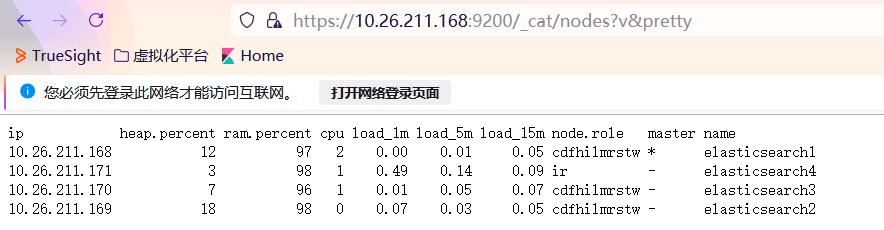

查询集群在线节点#

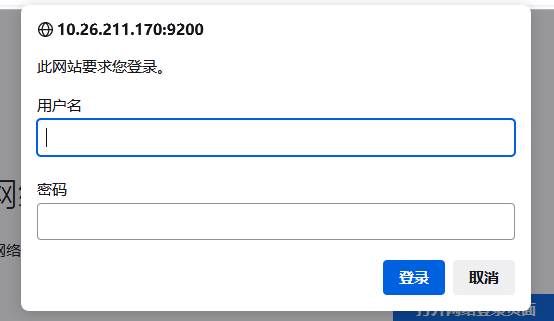

浏览器访问:https://10.26.211.168:9200/_cat/nodes?v&pretty

访问需要提供elastic账户和密码

密码为步骤时生成的

四个节点均在线
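The same check can be done from a terminal. In this sketch the function names are hypothetical; -k is used because the cluster certificates are self-signed, and curl asks for the elastic password interactively.

```shell
# Count the nodes in `_cat/nodes?v` output read from stdin.
# The first line is the column header, so it is skipped.
count_nodes() {
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }'
}

# Query one node and count the cluster members.
check_cluster() {
  host="${1:-10.26.211.168}"
  curl -sS -k -u elastic "https://${host}:9200/_cat/nodes?v" | count_nodes
}

# Usage on a host that can reach the cluster:
#   check_cluster 10.26.211.168
```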

脚本启动#

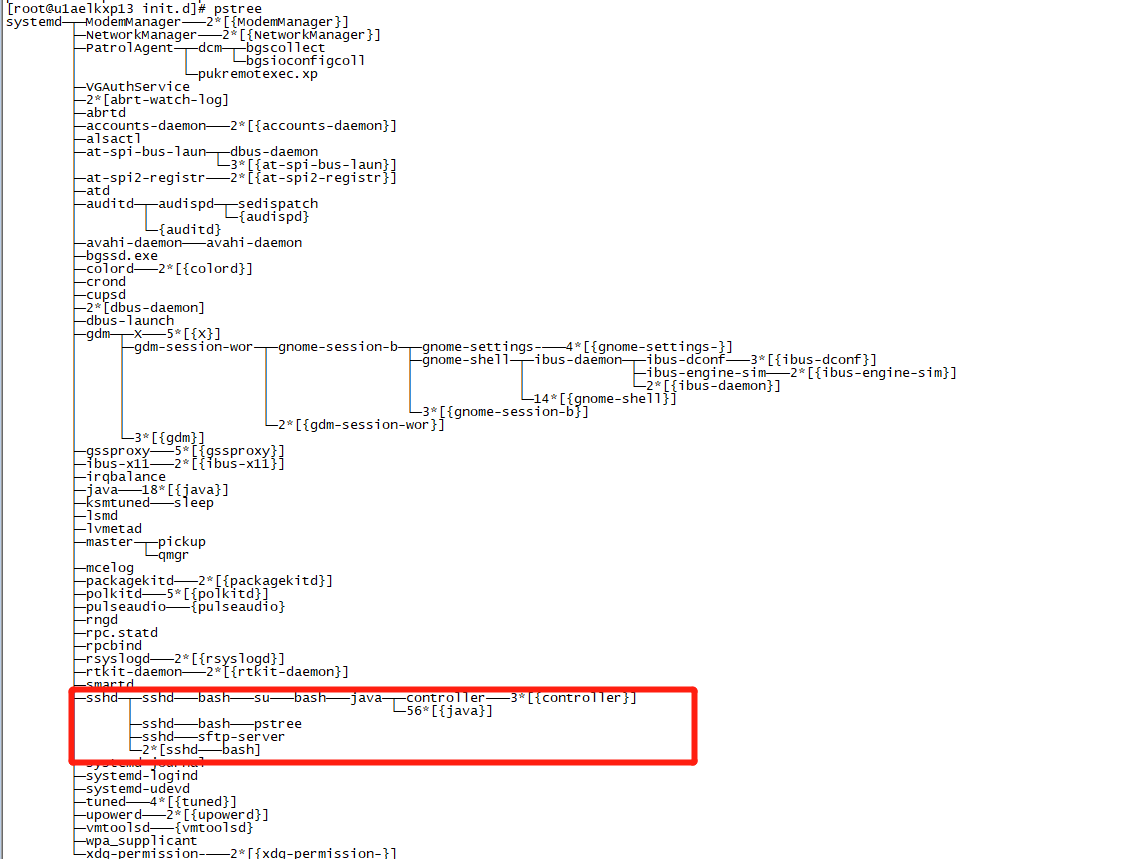

通过ssh连接服务器上启动的进程,其父进程为sshd ,当会话断开连接时,启动的进程将会被关闭

为了长久运行,通常采用配置服务文件通过systemctl启动或旧版service启动

通过service管理#

放置于/etc/init.d中

vi /etc/init.d/elasticsearch

需要使用root用户启动/停止
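The core of such a script might look like the sketch below. It is not the author's script: ES_HOME, RUN_AS and PID_FILE are assumed values, and es_ctl is a hypothetical name. It relies on the -d (daemonize) and -p (PID file) options of bin/elasticsearch.

```shell
# Assumed values; override them through the environment if needed.
ES_HOME="${ES_HOME:-/soft/elasticsearch8}"
RUN_AS="${RUN_AS:-elk}"
PID_FILE="${PID_FILE:-/data/elasticsearch8.pid}"

# True when the PID file exists and the recorded process is alive.
es_running() {
  [ -f "$PID_FILE" ] && kill -0 "$(cat "$PID_FILE")" 2>/dev/null
}

es_ctl() {
  case "$1" in
    start)
      if es_running; then echo "already running"; return 0; fi
      # Elasticsearch refuses to run as root, so switch to the service user.
      su - "$RUN_AS" -c "${ES_HOME}/bin/elasticsearch -d -p ${PID_FILE}"
      ;;
    stop)
      if es_running; then kill "$(cat "$PID_FILE")"; fi
      ;;
    status)
      if es_running; then echo "running"; else echo "stopped"; return 3; fi
      ;;
    restart)
      es_ctl stop
      while es_running; do sleep 1; done
      es_ctl start
      ;;
    *)
      echo "usage: es_ctl {start|stop|status|restart}" >&2
      return 2
      ;;
  esac
}
```

In a real /etc/init.d/elasticsearch file the last line would be es_ctl "$1", so that service elasticsearch start and the other actions dispatch to the matching branch.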

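Start#

Stop#

Status#

Restart#

Kibana#

The local host 10.26.211.171 is used as the cluster access point

Upload the Package#

Upload kibana-8.0.0-linux-x86_64.tar.gz to the /soft directory on 10.26.211.171

Extract#

Operate as the elk user

Configure Start, Stop, Status, Restart#

Basic Configuration#

Bind the local IP

Set the Chinese locale

Bind the port

Configure log output

The log directory must be created first, otherwise Kibana reports an error

mkdir /logs/kibana8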
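The four basic settings could look like the kibana.yml fragment printed by this sketch. The function name and the log file name kibana.log are assumptions; port 5601 and the /logs/kibana8 directory come from this tutorial.

```shell
# Print the basic kibana.yml settings described above.
# Arguments: bind IP (default 10.26.211.171), log directory.
render_kibana_yml() {
  bind_ip="${1:-10.26.211.171}"
  log_dir="${2:-/logs/kibana8}"
  cat <<EOF
server.host: "${bind_ip}"
server.port: 5601
i18n.locale: "zh-CN"
logging:
  appenders:
    file:
      type: file
      fileName: ${log_dir}/kibana.log
      layout:
        type: json
  root:
    appenders: [default, file]
EOF
}

render_kibana_yml
```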

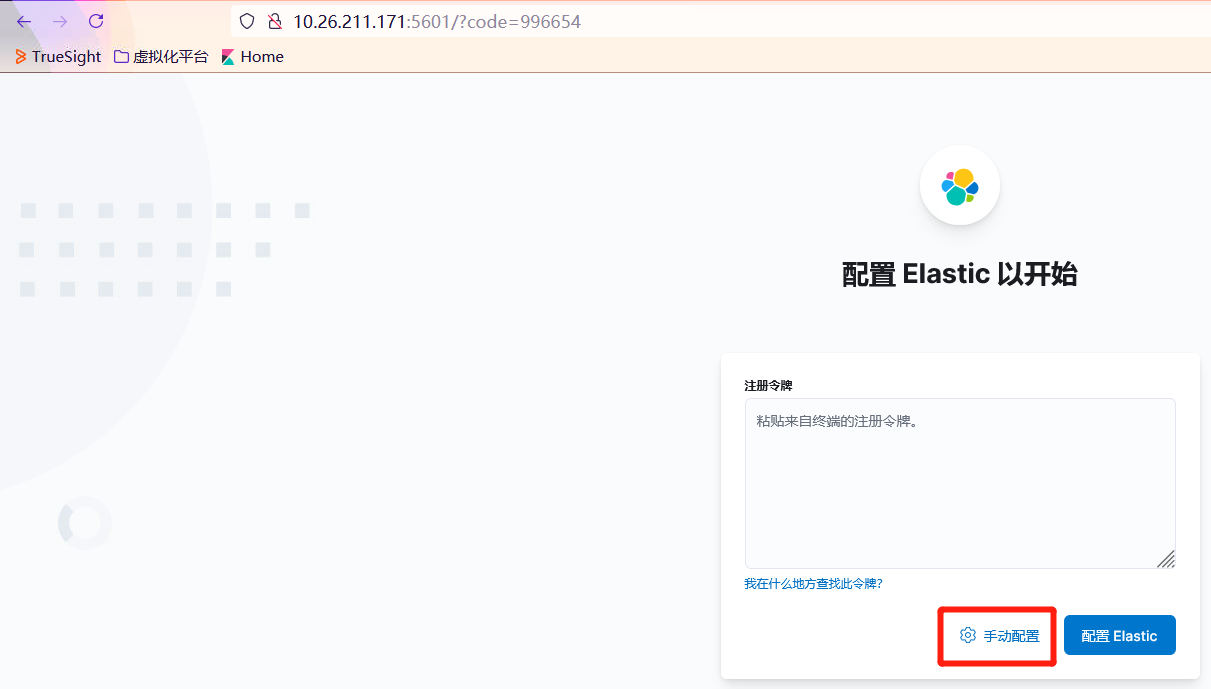

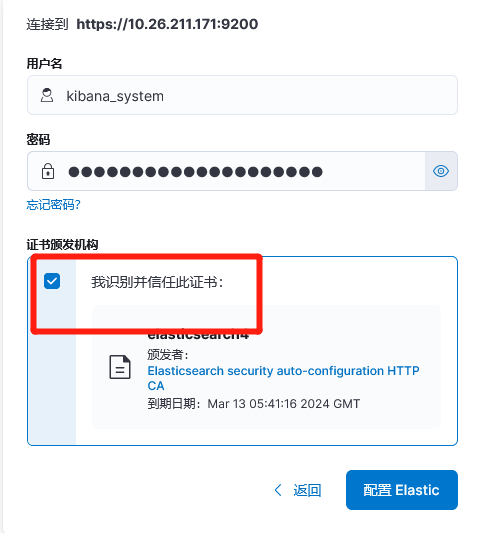

网页配置#

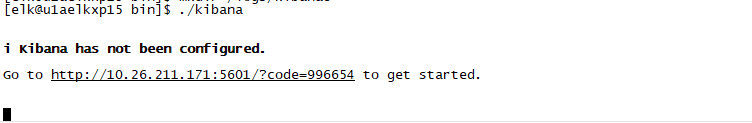

运行#

浏览器打开http://10.26.211.171:5601/?code=996654进行网页配置

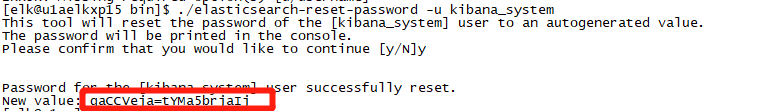

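Reset the kibana_system Password#

Starting with a Script#

Start, Stop, Status, Restart#

DNS Log Integration#

Server: 10.26.246.60

Log files are located in: /logs/dnsqtime/

A single log entry looks like this:

The flow from log generation to delivery into Elasticsearch:

TCPDUMP -> logger -> Filebeat -> Logstash -> Elasticsearch

Deploy Filebeat & Logstash#

Upload the Software#

Extract#

Rename#

Filebeat#

Input -> Filter -> Output

Configuration#

DNS Log Input (filestream)#

Filebeat Program Logging#

Enable Modules#

Preprocessing#

Output Data to the Local Logstash#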
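A minimal sketch of the input and output halves of filebeat.yml for this host follows. Several values are assumptions: the glob under /logs/dnsqtime/, the input id, the logindex field value dnsqtime (chosen to match the filter file name used later), and the Logstash address 127.0.0.1:5044.

```shell
# Print a filebeat.yml fragment: one filestream input for the DNS logs
# and an output that ships events to a Logstash on the same host.
render_filebeat_yml() {
  log_glob="${1:-/logs/dnsqtime/*}"
  logstash_addr="${2:-127.0.0.1:5044}"
  cat <<EOF
filebeat.inputs:
  - type: filestream
    id: dnsqtime
    paths:
      - ${log_glob}
    fields:
      logindex: dnsqtime

output.logstash:
  hosts: ["${logstash_addr}"]
EOF
}

render_filebeat_yml
```

Tagging events with a custom field lets the Logstash filters select them with a condition on [fields][logindex], which is the pattern the buffer-node pipelines in this tutorial use.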

Configure the Kibana Endpoint#

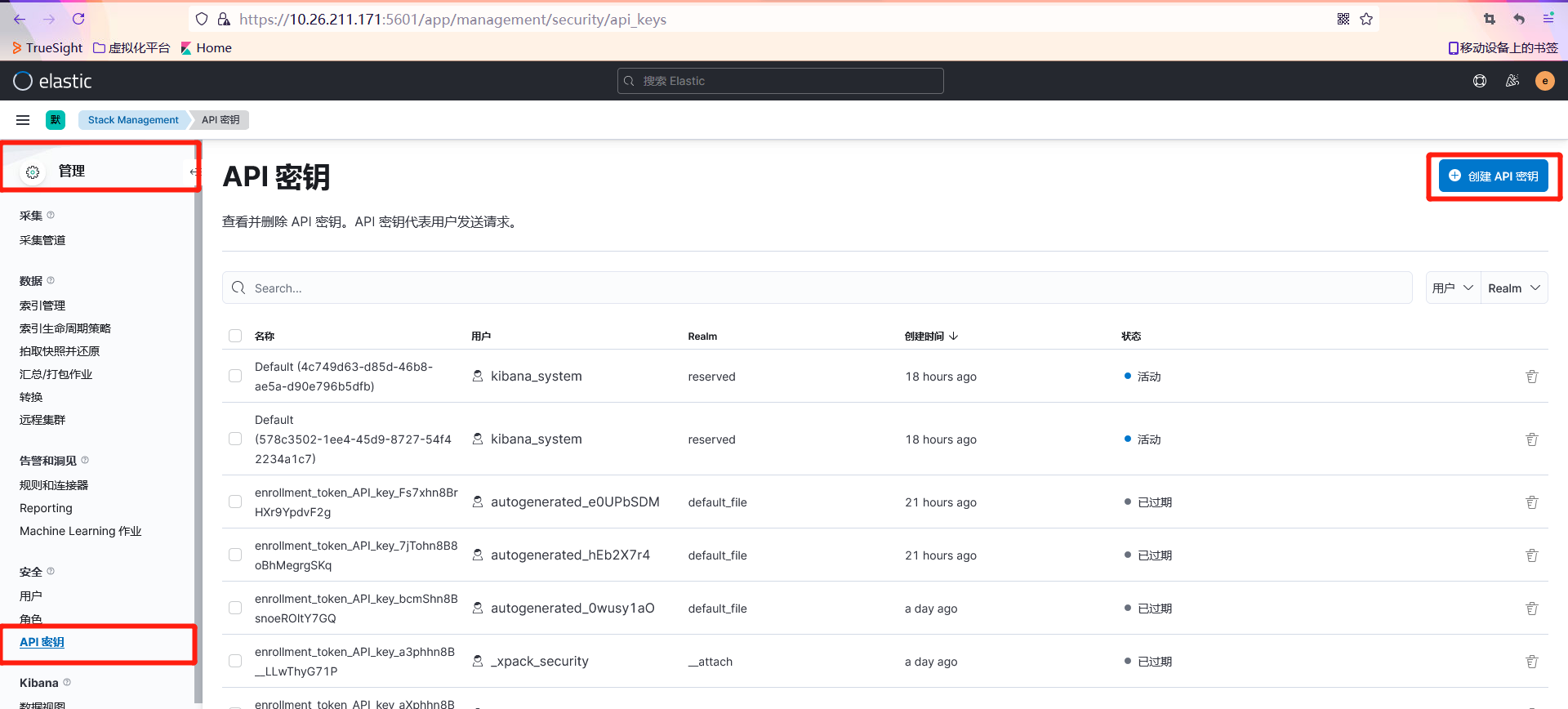

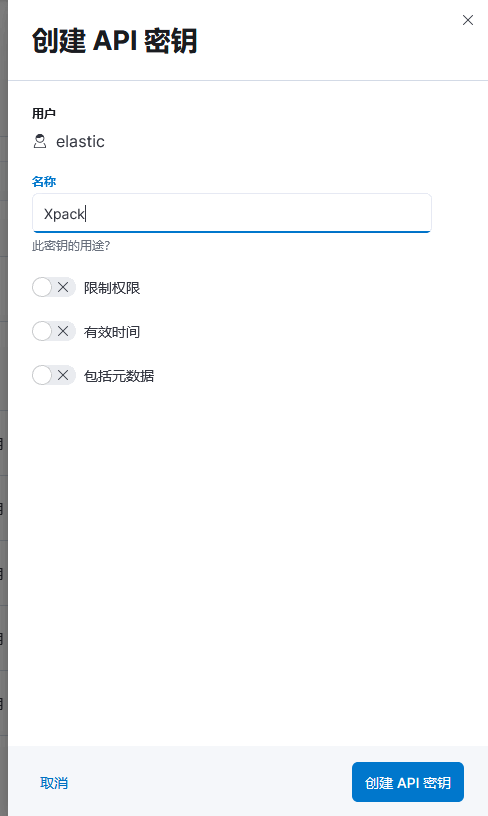

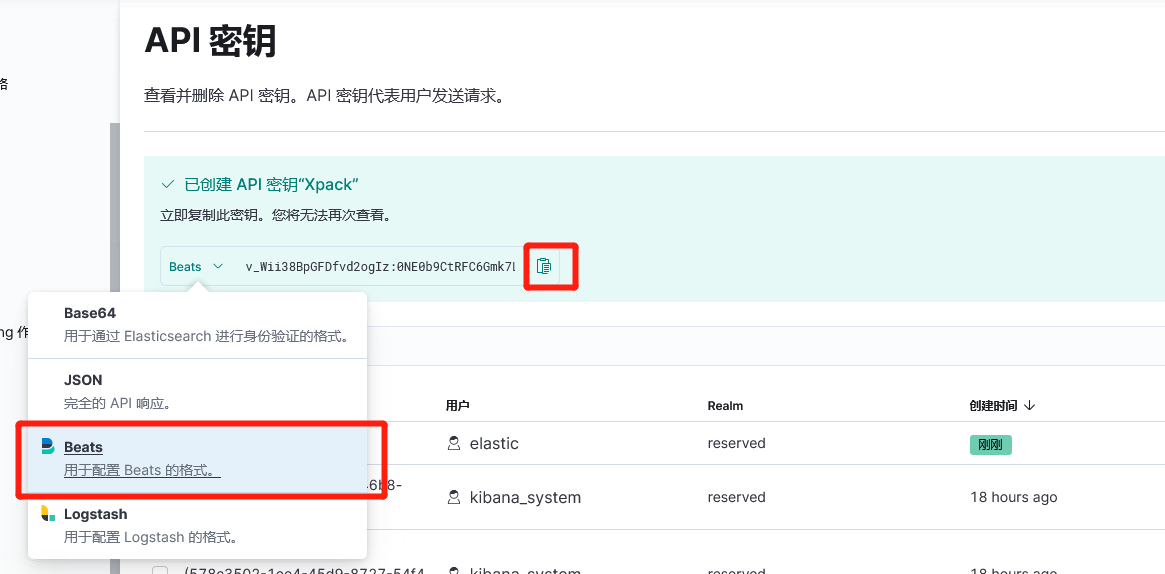

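Xpack#

Generate an API key for X-Pack from Kibana

Final configuration

Starting with a Script#

Start, Stop, Status, Restart#

Logstash#

Configuration#

Main Configuration#

Extended Configuration#

Create a custom configuration directory

Add the configuration files

- filter-dnsqtime.conf

- input-filebeat.conf

- output-es.conf

input-filebeat.conf

filter-dnsqtime.conf

output-es.conf

Create an API key for Logstash output to Elasticsearch

API key: wfU5jH8BpGFDfvd2oQKb:WRy5BmpLQD-FexsyjzGQXA

Starting with a Script#

Start, Stop, Status, Restart#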

Data Buffering and Processing#

Perform the same steps on both servers

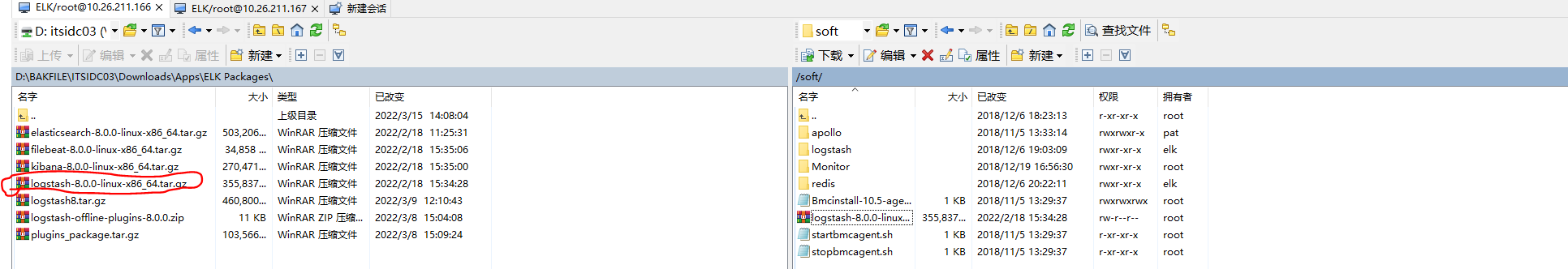

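Upload the Package#

Deploy logstash and redis

logstash-8.0.0-linux-x86_64.tar.gz -> /soft

- 10.26.211.166

- 10.26.211.167

Extract#

Operate as the elk user

Rename#

Configuration#

Configuration directory: /soft/logstash8/config

Main Configuration#

logstash.yml#

10.26.211.166

10.26.211.167

Extended Configuration#

Create the configuration directory

Add the Configuration Files#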

filter-dnsserver.conf

    input {
      redis {
        host => "10.26.211.166"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "dnsserver"
        batch_count => 2000
        threads => 4
      }
      redis {
        host => "10.26.211.167"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "dnsserver"
        batch_count => 2000
        threads => 4
      }
    }
    filter {
      if [fields][logindex] == "dnsserver" {
        json {
          source => "message"
          remove_field => ["message","beat.name","beat.version","offset"]
        }
        if "named" in [app-name] {
          if "6" in [syslogseverity] {
            grok {
              match => {
                "log_info" => "{\s+client %{IPV4:sourceip}#%{NUMBER:reqport} \(%{DATA:reqaddress}\): view %{WORD:viewtype}: query: (%{DATA:reqhost}(?<domain>([a-zA-Z0-9_-]{1,62})(\.(cn|com|net|dpca|tv|org|gov|info|la|cc|co)?(\.cn|jp|dpca)?))?) IN %{DATA:qtype} \(%{DATA:desip}\)}"
              }
            }
          }
          if "5" in [syslogseverity] {
            grok {
              match => {
                "log_info" => "{%{DATA:str1} %{IPV4:targetip}#%{NUMBER:port} %{DATA:action1}: %{DATA:detail} -- %{GREEDYDATA:reason}}"
              }
            }
          }
        }
      }
    }

filter-olft.conf

    input {
      redis {
        host => "10.26.211.166"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "olft"
        batch_count => 2000
        threads => 4
      }
      redis {
        host => "10.26.211.167"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "olft"
        batch_count => 2000
        threads => 4
      }
    }
    filter {
      if [fields][logindex] == "olft" {
        if [fields][doctype] == "olft_summary" {
          json {
            source => "message"
            remove_field => ["message"]
          }
        }
        if [fields][doctype] == "olft_detail" {
          json {
            source => "message"
          }
          date {
            match => [ "endTime" , "yyyyMMddHHmmss" ]
            add_field => {"hostname" => "%{[beat][hostname]}"}
            remove_field => ["beat","message"]
          }
          geoip {
            source => "ip"
            target => "geoip"
            fields => ["city_name","country_name","","location"]
          }
          mutate {
            convert => ["[fileSize]","integer"]
          }
        }
      }
    }

input-filebeat.conf

    input {
      redis {
        host => "10.26.211.166"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "filebeat"
        batch_count => 2000
        threads => 4
      }
      redis {
        host => "10.26.211.167"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "filebeat"
        batch_count => 2000
        threads => 4
      }
    }

input-oslog.conf

    input {
      redis {
        host => "10.26.211.166"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "oslog"
        batch_count => 2000
        threads => 4
      }
      redis {
        host => "10.26.211.167"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "oslog"
        batch_count => 2000
        threads => 4
      }
    }

input-pvnserver.conf

    input {
      redis {
        host => "10.26.211.166"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "pvnserver"
        batch_count => 2000
        threads => 4
      }
      redis {
        host => "10.26.211.167"
        password => "Red_elk1"
        data_type => "list"
        port => 6379
        key => "pvnserver"
        batch_count => 2000
        threads => 4
      }
    }

output-es.conf

Create an API key for data output

API key: i_4mm38B45nhiZLu0Rlm:pst0SURmS9eTWQDjEMD3OQ

    output {
      if [fileset][module] == "system" or [fileset][module] =="apache2" {
        elasticsearch {
          api_key => "i_4mm38B45nhiZLu0Rlm:pst0SURmS9eTWQDjEMD3OQ"
          ssl => true
          ssl_certificate_verification => false
          hosts => ["10.26.211.168:9200","10.26.211.169:9200","10.26.211.170:9200","10.26.211.171:9200"]
          manage_template => false
          #index => "%{[@metadata][beat]}-%{[@metadata][version]}-%{+YYYY.MM.dd}"
          index => "syslog-%{[@metadata][version]}-%{+YYYY.MM.dd}"
        }
      } else if [fields][logindex] == "olft" {
        elasticsearch {
          api_key => "i_4mm38B45nhiZLu0Rlm:pst0SURmS9eTWQDjEMD3OQ"
          ssl => true
          ssl_certificate_verification => false
          hosts => ["10.26.211.168:9200","10.26.211.169:9200","10.26.211.170:9200","10.26.211.171:9200"]
          index => "logstash-%{[fields][logindex]}-%{[fields][doctype]}-%{+YYYYMM}"
        }
      } else {
        elasticsearch {
          api_key => "wfU5jH8BpGFDfvd2oQKb:WRy5BmpLQD-FexsyjzGQXA"
          ssl => true
          ssl_certificate_verification => false
          hosts => ["10.26.211.168:9200","10.26.211.169:9200","10.26.211.170:9200","10.26.211.171:9200"]
          index => "logstash-%{[fields][logindex]}-%{[fields][doctype]}-%{+YYYYMMdd}"
        }
      }
    }
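The Redis input sections above differ only in the key they read, so they could be generated rather than copied. The sketch below is a hypothetical helper, not part of the original setup; it prints an input section for one key with the same hosts and options as the files above, and takes the password as an argument instead of hardcoding it.

```shell
# Print a Logstash input section that reads one Redis list key from
# both buffer hosts, using the same options as the files above.
# Arguments: key name, Redis password.
render_redis_inputs() {
  key="$1"
  password="$2"
  echo "input {"
  for host in 10.26.211.166 10.26.211.167; do
    cat <<EOF
  redis {
    host => "${host}"
    password => "${password}"
    data_type => "list"
    port => 6379
    key => "${key}"
    batch_count => 2000
    threads => 4
  }
EOF
  done
  echo "}"
}

# Example (the output file name follows the naming used above):
#   render_redis_inputs oslog "$REDIS_PASSWORD" > input-oslog.conf
```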

Script Management#

Start, Stop, Status, Restart#

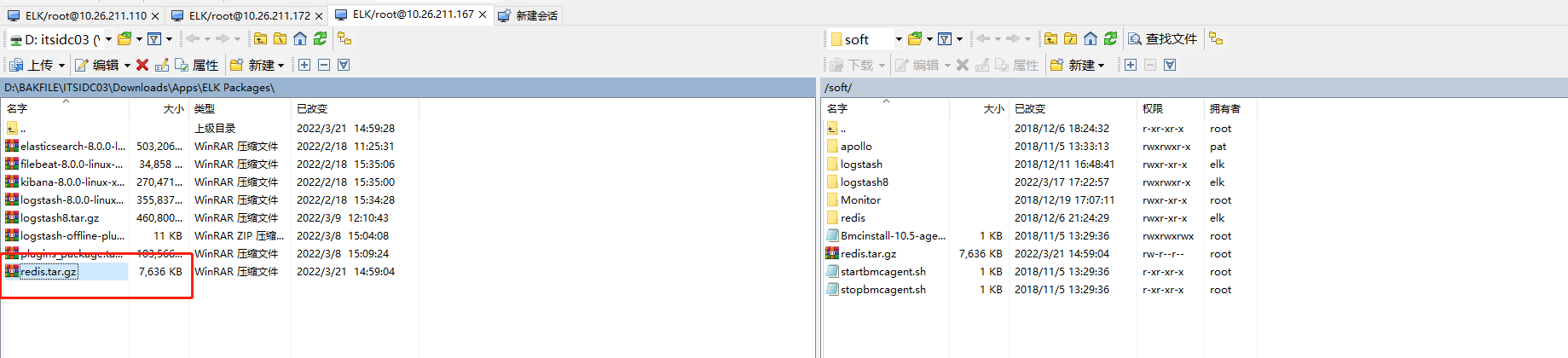

Redis Deployment#

- 10.26.211.166

- 10.26.211.167

Upload and Extract#

Configure Script Management#
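The Logstash inputs earlier in this tutorial connect to Redis on port 6379 with a password, so each Redis instance needs at least matching bind, port and requirepass settings. The sketch below prints such a redis.conf fragment; the function name and the daemonize setting are assumptions.

```shell
# Print the redis.conf directives that the Logstash redis inputs rely on.
# Arguments: IP to bind, password expected by the clients.
render_redis_conf() {
  bind_ip="$1"
  password="$2"
  cat <<EOF
bind ${bind_ip}
port 6379
requirepass ${password}
daemonize yes
EOF
}

# Example for the first buffer host:
#   render_redis_conf 10.26.211.166 "$REDIS_PASSWORD" >> redis.conf
```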

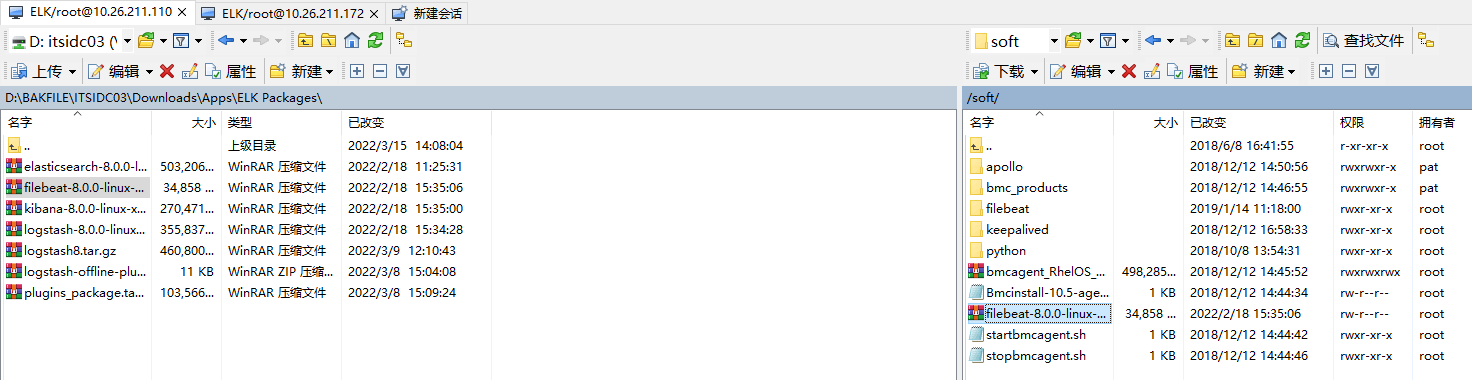

Receive and Process syslog Logs#

- 10.26.211.110

- 10.26.211.172

Upload the Software#

Extract#

Rename#

Configuration#

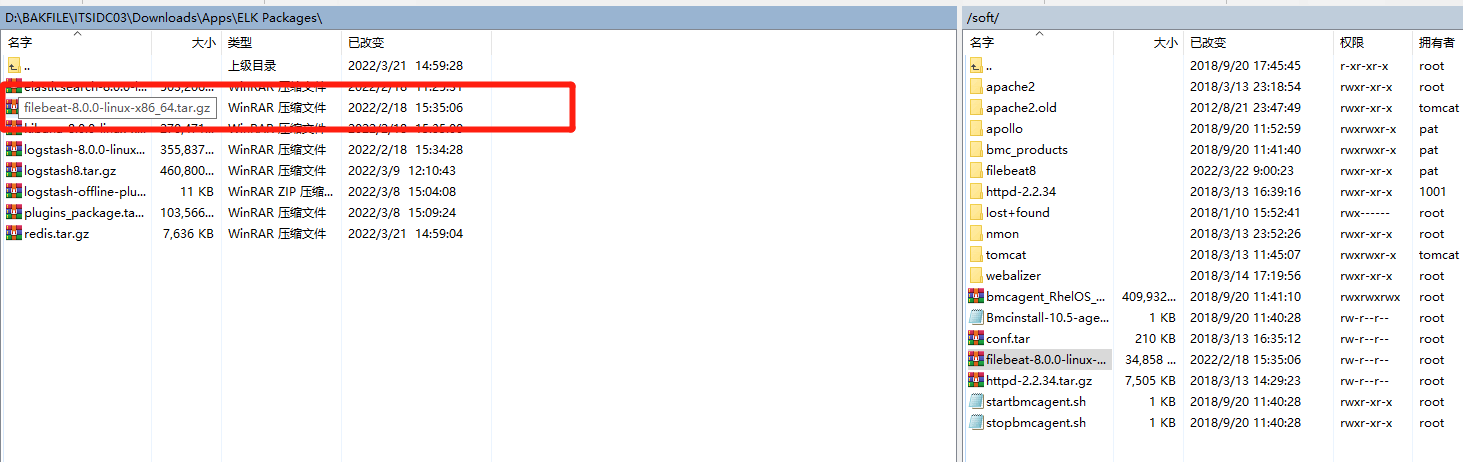

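Filebeat Client Deployment#

Supports collecting Apache and Nginx access logs and creating visualizations in Kibana

Operate as the pat user

Upload the Package to /soft#

Extract#

Rename#

Configuration#

Load Dashboards and Pipelines into Kibana#

Configure Modules#

Module directory: {filebeat_home}/modules.d

All modules are disabled by default

Apache#

Enable the module

Configure

Make sure the pat user has read access to the Apache access log file; if not, run the following as root: # chmod +r {path to the access file}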
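After the module is enabled (for example with ./filebeat modules enable apache), its settings live in modules.d/apache.yml. The sketch below prints a possible version of that file with only the access fileset turned on. The default log path is an assumption and must be replaced with the real access log location.

```shell
# Print a modules.d/apache.yml that collects only the access log.
# Argument: path or glob of the Apache access log (assumed default).
render_apache_module() {
  access_log="${1:-/var/log/httpd/access_log*}"
  cat <<EOF
- module: apache
  access:
    enabled: true
    var.paths: ["${access_log}"]
  error:
    enabled: false
EOF
}

render_apache_module
```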

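Other Modules#

Reference

Management Script#

Start, Stop, Status, Restart#

Cisco Log Collection#

- 10.26.211.110

Upload Filebeat, extract it into the /soft directory, and name the folder filebeat_cisco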